I Read the Leaked Claude Code Source — Here's What I Found

Today, the source code for Claude Code — Anthropic’s AI-powered CLI tool — leaked online. Naturally, I did what any curious developer would do.

I read it. All of it.

And what I found is genuinely fascinating. This isn’t some weekend hack or a thin wrapper around an API. Claude Code is one of the most sophisticated terminal applications I’ve ever seen — and it’s hiding some wild engineering decisions under the hood.

Let me walk you through the highlights.

The Numbers

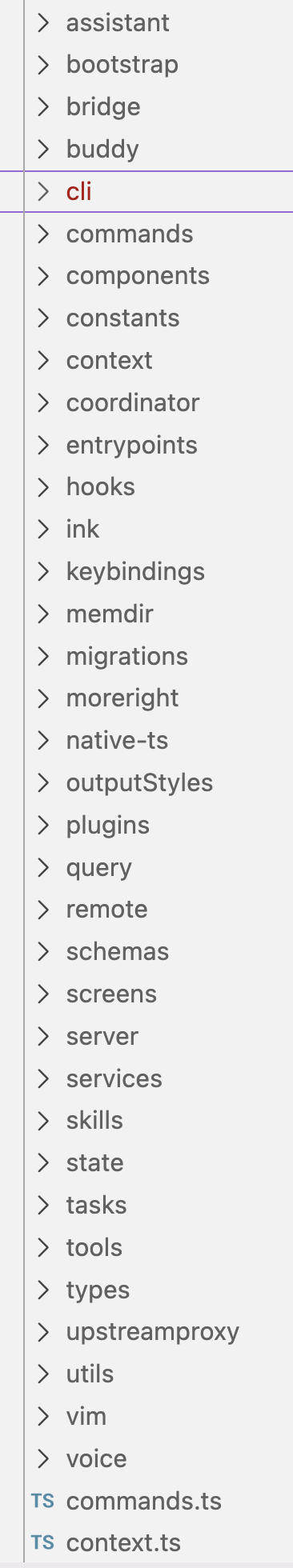

The leaked source tree. 35 top-level modules, from assistant to voice.

First, let’s set the stage:

- ~512,000 lines of TypeScript

- 1,884 files across 35 top-level modules

- 33 MB of source code

- 80+ built-in tools (Read, Write, Edit, Bash, Agent, etc.)

- main.tsx alone is 803 KB

This is not a small project. This is a production-grade agentic system that happens to live in your terminal.

They Built Their Own Terminal UI Framework

This is the one that made me sit up.

The ink/ directory — roughly 50 files — is not the popular npm ink package. Anthropic built their own React-based terminal rendering engine from scratch.

And it’s not a toy. It includes:

- A pure TypeScript port of Meta’s Yoga flexbox layout engine — no C++ bindings, no native dependencies. Flexbox. In your terminal.

- A custom React reconciler that goes from Fiber → DOM → Layout → Screen Buffer → Terminal output

- Mouse tracking with hit-testing (yes, you can click things in Claude Code)

- Text selection with word and line boundaries

- OSC 8 hyperlinks — clickable links in the terminal

- Terminal capability detection for Kitty, iTerm2, xterm.js, and multiplexers
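To give a feel for one item on that list, here is a minimal sketch of how an OSC 8 hyperlink is encoded. This is my own illustration of the escape sequence, not code lifted from the leak, and the `hyperlink` helper name is made up.

```typescript
// OSC 8 hyperlink layout:
//   ESC ] 8 ; params ; URI ST   visible text   ESC ] 8 ; ; ST
const ESC = "\u001b";
const OSC = ESC + "]";
const ST = ESC + "\\"; // String Terminator

// Hypothetical helper: wraps `text` so supporting terminals render it as a link.
function hyperlink(text: string, url: string): string {
  const open = `${OSC}8;;${url}${ST}`; // empty params, then the target URI
  const close = `${OSC}8;;${ST}`;      // empty URI closes the link
  return open + text + close;
}

const link = hyperlink("docs", "https://example.com");
```

Terminals that don't understand the sequence generally just show the plain text, which is presumably why capability detection sits right next to it on the list.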

Why build all this instead of using the existing ink package? My guess: performance and control. When your terminal app is running an AI agent that streams tokens in real-time while executing tools concurrently, you need tight control over rendering. Off-the-shelf solutions probably couldn’t keep up.

The Dual-Track Permission System

Security in Claude Code isn’t an afterthought — it’s deeply embedded in the architecture. And the approach is clever.

Track 1: Rules-based fast path. Pattern matching against tool inputs — file paths, shell commands — with glob and regex support. Three tiers: always-allow, always-deny, always-ask. This handles the obvious cases quickly.

Track 2: ML classifier. For ambiguous cases, like a bash command that might be destructive, Claude Code calls the Claude API itself to classify the risk. The model evaluates whether a command is dangerous before that command is allowed to run.
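Here's a rough sketch of how a two-track decision like this could fit together. Everything in it is my own assumption for illustration: the `Rule` shape, the deny-before-ask-before-allow precedence, the tiny glob matcher, and the synchronous `Classifier` stand-in (a real one would be an async model call).

```typescript
type Decision = "allow" | "deny" | "ask";

interface Rule {
  tool: string;     // e.g. "Bash" or "Write"
  pattern: string;  // glob over the tool input; "*" matches any run of characters
  decision: Decision;
}

// Convert a simple glob ("*" wildcard only) into an anchored RegExp.
function globToRegExp(glob: string): RegExp {
  const escaped = glob.replace(/[.+?^${}()|[\]\\]/g, "\\$&");
  return new RegExp("^" + escaped.replace(/\*/g, ".*") + "$");
}

// Assumed precedence: a deny rule beats an ask rule, which beats an allow rule.
const PRECEDENCE: Decision[] = ["deny", "ask", "allow"];

// Track 1: rules-based fast path. Returns undefined when no rule matches.
function matchRules(rules: Rule[], tool: string, input: string): Decision | undefined {
  for (const decision of PRECEDENCE) {
    const hit = rules.some(
      (r) => r.decision === decision && r.tool === tool && globToRegExp(r.pattern).test(input),
    );
    if (hit) return decision;
  }
  return undefined;
}

// Track 2 stand-in: only consulted for inputs the rules did not settle.
type Classifier = (tool: string, input: string) => Decision;

function decide(rules: Rule[], classify: Classifier, tool: string, input: string): Decision {
  return matchRules(rules, tool, input) ?? classify(tool, input);
}

const rules: Rule[] = [
  { tool: "Bash", pattern: "git status*", decision: "allow" },
  { tool: "Bash", pattern: "rm -rf *", decision: "deny" },
  { tool: "Write", pattern: "*.env", decision: "ask" },
];
const cautious: Classifier = () => "ask";
```

With these rules, `decide(rules, cautious, "Bash", "git status --short")` is settled by the fast path, while something like `curl ... | sh` matches nothing and falls through to the classifier.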

There’s also symlink traversal protection, so you can’t trick the tool into escaping its sandbox by creating symlinks to sensitive paths. And the permission system sits in a 62 KB file (utils/permissions/filesystem.ts) — it’s one of the largest single modules in the codebase.

An AI that uses itself to decide if its own actions are safe. We’re living in interesting times.

Streaming Tool Execution

Most people assume Claude Code works like this:

- Send prompt to API

- Wait for full response

- Parse out tool calls

- Execute tools

- Repeat

But that’s not what happens. Claude Code uses a StreamingToolExecutor that starts executing tools as the API streams them in. The moment a complete tool-use block arrives, execution begins — even if the model is still generating more tool calls.

And tools marked as isConcurrencySafe run in parallel. So while Claude is still thinking about what to do next, your first three file reads are already finishing.

Results are buffered and emitted in the order they were requested, keeping the conversation coherent even though execution is out-of-order. It’s a small detail that makes the whole experience feel significantly faster.
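The start-early, emit-in-order idea is easy to sketch with plain promises. To be clear, this is my own toy version, not the real StreamingToolExecutor: `OrderedExecutor`, `ToolCall`, and the rule that a non-concurrency-safe tool waits for everything submitted before it are all assumptions, and error handling is left out.

```typescript
interface ToolCall {
  id: string;
  isConcurrencySafe: boolean;
  run: () => Promise<string>;
}

interface ToolResult {
  id: string;
  output: string;
}

class OrderedExecutor {
  private inFlight: Promise<ToolResult>[] = [];
  private lastExclusive: Promise<void> = Promise.resolve();

  // Called as soon as a complete tool-use block has streamed in.
  submit(call: ToolCall): void {
    // Safe tools only wait for the most recent exclusive tool;
    // exclusive tools wait for everything submitted so far.
    const gate: Promise<void> = call.isConcurrencySafe
      ? this.lastExclusive
      : Promise.all(this.inFlight).then(() => undefined);

    const task = gate
      .then(() => call.run())
      .then((output) => ({ id: call.id, output }));

    this.inFlight.push(task);
    if (!call.isConcurrencySafe) {
      this.lastExclusive = task.then(() => undefined);
    }
  }

  // Promise.all keeps submission order, whatever order the tasks finish in.
  results(): Promise<ToolResult[]> {
    return Promise.all(this.inFlight);
  }
}
```

The nice property is that ordering costs nothing extra: the results array is indexed by submission, so a slow first read never reorders the conversation, it just delays the point at which the batch is complete.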

Feature Gates and Dead Code Elimination

The codebase is littered with compile-time feature flags:

- KAIROS: a proactive agent mode

- COORDINATOR_MODE: multi-worker distribution

- VOICE_MODE: voice input

- PROACTIVE: autonomous agent behavior

These aren’t runtime flags. They’re Bun’s dead-code elimination gates. At build time, entire features are stripped from the binary. This means Anthropic can ship different builds with different capabilities from the exact same source tree.
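If you haven't seen this pattern before, here's the general shape of a build-time gate. This is a generic sketch, not the mechanism from the leak: I'm using an ordinary constant as a stand-in for a value the bundler would substitute (Bun's `--define` build option is one way I know of to inject such literals), and `availableCommands` is a made-up function.

```typescript
// Stand-in for a constant that the bundler would replace with a literal at build time.
const VOICE_MODE: boolean = false;

function availableCommands(): string[] {
  const commands = ["chat", "edit"];
  if (VOICE_MODE) {
    // Once VOICE_MODE is the literal `false`, a bundler that folds constants
    // can prove this branch never runs and drop it, along with anything
    // that only this branch imports.
    commands.push("voice");
  }
  return commands;
}
```

The difference from a runtime flag is that the gated code isn't merely disabled in the shipped build. It isn't there at all.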

It also means the leaked source contains features that may never have shipped to users. COORDINATOR_MODE, for instance, implements a multi-worker system that distributes agent tasks across CPU cores — one worker per logical CPU. It’s sitting right there in the coordinator/ directory, but it’s gated off in standard builds.

The Agent Spawning System

The AgentTool is where things get really interesting. When Claude Code spawns a sub-agent, it has three isolation modes:

- In-process: shared memory, same context

- Worktree: creates a temporary git worktree, giving the child agent its own sandboxed copy of the repository

- Remote: runs in a cloud environment (coordinator mode)

The worktree approach is particularly elegant. Instead of complex filesystem virtualization, they just leverage Git’s built-in worktree feature to give each agent an isolated copy of the codebase. Simple, reliable, and the cleanup is automatic.
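To show why the worktree mode is so cheap to implement, here's a sketch that just plans the Git commands for each mode without running them. The `Isolation` and `SpawnPlan` types and the `planSpawn` function are my own inventions. Only the `git worktree add` and `git worktree remove` subcommands are real Git, and whether Claude Code passes these exact flags is an assumption.

```typescript
type Isolation =
  | { mode: "in-process" }
  | { mode: "worktree"; path: string; ref: string }
  | { mode: "remote"; endpoint: string };

interface SpawnPlan {
  setup: string[][];    // commands (as argv arrays) to run before the agent starts
  teardown: string[][]; // commands to run once the agent is done
  cwd?: string;         // working directory for the child agent, if it differs
}

function planSpawn(isolation: Isolation): SpawnPlan {
  switch (isolation.mode) {
    case "in-process":
      // Shares the parent's checkout, so there is nothing to set up.
      return { setup: [], teardown: [] };
    case "worktree":
      return {
        // A detached worktree gives the child its own checkout of `ref`.
        setup: [["git", "worktree", "add", "--detach", isolation.path, isolation.ref]],
        teardown: [["git", "worktree", "remove", "--force", isolation.path]],
        cwd: isolation.path,
      };
    case "remote":
      // Provisioning is outside the scope of this sketch.
      return { setup: [], teardown: [] };
  }
}

const plan = planSpawn({ mode: "worktree", path: "/tmp/agent-1", ref: "HEAD" });
```

Two commands in, one command out, and the child can edit files freely without touching the parent's working tree.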

There’s even a SendMessage tool for agents to communicate with each other via a shared mailbox — a lightweight multi-agent coordination protocol.

Tool Results Go to Disk

Here’s a practical detail I appreciated: large tool results aren’t kept in memory. They’re persisted to disk with deferred loading.

When you ask Claude Code to read a massive file or run a command that produces pages of output, that content gets written to a temp file. The conversation holds a reference, not the content. This prevents memory bloat during long sessions where you might be reading dozens of files and running hundreds of commands.
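The reference-instead-of-content idea looks roughly like this. It's a sketch under my own assumptions: the `BlobStore` interface, the 1,000-character threshold, and the preview field are all hypothetical, and I've used an in-memory map as a stand-in for temp files so the example runs anywhere.

```typescript
interface BlobStore {
  write(key: string, content: string): void;
  read(key: string): string;
}

// In-memory stand-in; a real store would write to temp files on disk.
class MemoryStore implements BlobStore {
  private blobs = new Map<string, string>();

  write(key: string, content: string): void {
    this.blobs.set(key, content);
  }

  read(key: string): string {
    const value = this.blobs.get(key);
    if (value === undefined) throw new Error(`missing blob: ${key}`);
    return value;
  }
}

type StoredResult =
  | { kind: "inline"; content: string }
  | { kind: "ref"; key: string; length: number; preview: string };

const INLINE_LIMIT = 1000; // hypothetical threshold, in characters

// Small results stay in the conversation; large ones are swapped for a reference.
function storeResult(store: BlobStore, id: string, content: string): StoredResult {
  if (content.length <= INLINE_LIMIT) return { kind: "inline", content };
  store.write(id, content);
  return { kind: "ref", key: id, length: content.length, preview: content.slice(0, 80) };
}

// Deferred loading: the full content is only read back when someone asks for it.
function loadResult(store: BlobStore, result: StoredResult): string {
  return result.kind === "inline" ? result.content : store.read(result.key);
}
```

The conversation object only ever holds the small `StoredResult`, so memory use tracks the number of tool calls rather than the size of their output.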

The Two-Layer Query Engine

The brain of Claude Code is split into two files:

- QueryEngine.ts (1,295 lines): the high-level agentic loop. Manages retries, budget tracking, permission checks, and the overall flow of “ask model → execute tools → repeat.”

- query.ts (1,729 lines): the low-level orchestration. Assembles system prompts, manages message history, executes hooks, handles streaming.

This separation is smart. The outer loop handles strategy (should we retry? are we over budget? does the user need to approve this?). The inner loop handles mechanics (how do we actually call the API, parse the response, and run the tools?).

Budget tracking is granular — per-model, per-session, tracking both tokens and dollars, with enforcement mid-conversation.
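Here's a small sketch of what per-model, per-session budget tracking with mid-conversation enforcement could look like. The `BudgetTracker` class, the `Price` shape, and the idea of checking after every model call are my assumptions, not the leaked implementation. The prices in the usage example are made-up numbers.

```typescript
interface Price {
  inputPerMTok: number;  // USD per million input tokens
  outputPerMTok: number; // USD per million output tokens
}

class BudgetTracker {
  private spentUsd = 0;
  private tokensByModel = new Map<string, number>();
  private readonly prices: Record<string, Price>;
  private readonly limitUsd: number;

  constructor(prices: Record<string, Price>, limitUsd: number) {
    this.prices = prices;
    this.limitUsd = limitUsd;
  }

  // Record usage from one model call, tracking both tokens and dollars.
  record(model: string, inputTokens: number, outputTokens: number): void {
    const price = this.prices[model];
    if (!price) throw new Error(`no price configured for ${model}`);
    this.spentUsd +=
      (inputTokens * price.inputPerMTok + outputTokens * price.outputPerMTok) / 1_000_000;
    const previous = this.tokensByModel.get(model) ?? 0;
    this.tokensByModel.set(model, previous + inputTokens + outputTokens);
  }

  tokensFor(model: string): number {
    return this.tokensByModel.get(model) ?? 0;
  }

  totalUsd(): number {
    return this.spentUsd;
  }

  // The outer loop would call this between turns and stop when it returns true.
  isOverBudget(): boolean {
    return this.spentUsd > this.limitUsd;
  }
}

// Hypothetical model name and prices, purely for illustration.
const tracker = new BudgetTracker({ "model-a": { inputPerMTok: 1, outputPerMTok: 2 } }, 0.01);
tracker.record("model-a", 1000, 1000); // costs (1000 * 1 + 1000 * 2) / 1e6 USD
```

Checking between turns is what makes the enforcement "mid-conversation": the loop can stop before the next API call instead of discovering the overrun at the end of the session.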

MCP (Model Context Protocol) Integration

Claude Code is a first-class MCP client. The services/mcp/ directory handles:

- Connecting to MCP servers via stdio, SSE, or WebSocket

- Auto-loading server configs from ~/.claude/mcp.json

- Dynamic OAuth flows for authenticated servers

- Tool discovery and normalization

- Rate limiting and caching

Every MCP tool gets wrapped in an MCPTool adapter that makes it look like a native Claude Code tool. From the model’s perspective, there’s no difference between a built-in tool and one provided by an MCP server.
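The adapter pattern described here is simple enough to sketch. The interfaces below are my own simplification: MCP tool listings do carry a name, description, and input schema, but I've flattened the call result to a string, and the `mcp__server__tool` naming scheme, `McpConnection`, and `adaptMcpTool` are assumptions for the sake of the example.

```typescript
// What an MCP server advertises for each tool (simplified).
interface McpToolDescriptor {
  name: string;
  description?: string;
  inputSchema: object;
}

// Hypothetical handle to a connected server; the transport is hidden behind it.
interface McpConnection {
  serverName: string;
  callTool(name: string, args: Record<string, unknown>): Promise<string>;
}

// The shape every tool has from the agent loop's point of view.
interface NativeTool {
  name: string;
  description: string;
  inputSchema: object;
  call(args: Record<string, unknown>): Promise<string>;
}

function adaptMcpTool(conn: McpConnection, tool: McpToolDescriptor): NativeTool {
  return {
    // Namespacing by server avoids collisions with built-in tools.
    name: `mcp__${conn.serverName}__${tool.name}`,
    description: tool.description ?? "",
    inputSchema: tool.inputSchema,
    // Forward the call over the connection using the server's own tool name.
    call: (args) => conn.callTool(tool.name, args),
  };
}
```

Because the adapter returns the same `NativeTool` shape as everything else, the permission checks, streaming execution, and result storage can all treat MCP tools exactly like built-ins.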

Session Persistence and Resume

Full message history plus metadata is serialized to disk. You can resume a conversation exactly where you left off — all context preserved, all tool results available.

This isn’t just “save chat history.” The serialization includes permission states, MCP connections, cost tracking data, and in-progress task states. It’s a full checkpoint/restore system for an agentic session.
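A checkpoint like that boils down to a versioned snapshot you can round-trip through JSON. Here's a minimal sketch. The `SessionCheckpoint` fields mirror the categories above, but the exact shape, the version field, and the function names are all my guesses rather than the real on-disk format.

```typescript
interface SessionCheckpoint {
  version: 1;
  messages: { role: "user" | "assistant"; text: string }[];
  permissions: Record<string, "allow" | "deny" | "ask">;
  mcpServers: string[];   // names of servers to reconnect on resume
  costUsd: number;
  pendingTasks: string[]; // ids of tasks that were still in progress
}

function serializeSession(checkpoint: SessionCheckpoint): string {
  return JSON.stringify(checkpoint);
}

// Reject anything that is not a version-1 checkpoint instead of guessing.
function restoreSession(raw: string): SessionCheckpoint {
  const parsed: unknown = JSON.parse(raw);
  if (
    typeof parsed !== "object" ||
    parsed === null ||
    (parsed as { version?: unknown }).version !== 1
  ) {
    throw new Error("unsupported checkpoint format");
  }
  return parsed as SessionCheckpoint;
}

const checkpoint: SessionCheckpoint = {
  version: 1,
  messages: [{ role: "user", text: "fix the failing test" }],
  permissions: { "Bash:git status*": "allow" },
  mcpServers: ["docs"],
  costUsd: 0.42,
  pendingTasks: ["task-7"],
};
const resumed = restoreSession(serializeSession(checkpoint));
```

A real implementation would validate every field on restore. The version check is the bare minimum needed to avoid resuming from a file written by an incompatible build.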

Prompt Caching

When you read a large file in Claude Code, that content gets tagged for API-level prompt caching. When a later request in the conversation starts with that same content, the API can serve it from the cache, and cached tokens are billed at a fraction of the normal input price instead of the full rate.

This is a meaningful cost optimization. In a typical coding session, the same files get read multiple times as context accumulates. Without caching, that would burn through your token budget fast.
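For the curious, tagging content for caching happens in the request body. The sketch below builds a user message in the shape the Anthropic Messages API expects, with a `cache_control` marker on the file content. The `buildUserMessage` helper is my own, and I'm not claiming this is how Claude Code assembles its requests.

```typescript
interface TextBlock {
  type: "text";
  text: string;
  cache_control?: { type: "ephemeral" };
}

interface UserMessage {
  role: "user";
  content: TextBlock[];
}

// Hypothetical helper: puts the large, stable content first and marks it as
// cacheable, then appends the part that changes from request to request.
function buildUserMessage(fileContents: string, question: string): UserMessage {
  return {
    role: "user",
    content: [
      { type: "text", text: fileContents, cache_control: { type: "ephemeral" } },
      { type: "text", text: question },
    ],
  };
}

const message = buildUserMessage("// contents of a large file", "Where is the retry logic?");
```

Caching works on the request prefix up to the marked block, so the ordering matters: stable content goes first, and anything that varies goes after the marker.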

What This Tells Us

Looking at the source code as a whole, a few things stand out:

The investment is massive. Half a million lines of TypeScript with custom UI frameworks, ML-powered security, multi-agent coordination, and streaming execution pipelines. This isn’t a side project — it’s a core product with serious engineering behind it.

The architecture assumes agents will be long-running and autonomous. Budget tracking, session persistence, disk-based result storage, cost enforcement — these are features you build when you expect an agent to run for extended periods without supervision.

Security is treated as a first-class concern. The dual-track permission system, symlink protection, and per-tool concurrency safety flags show that Anthropic is thinking carefully about what happens when an AI agent has access to your filesystem and shell.

The multi-agent future is already being built. The coordinator mode, agent spawning with worktree isolation, and inter-agent messaging aren’t shipping features — but they’re fully implemented in the source. Anthropic is clearly preparing for a world where multiple AI agents collaborate on tasks simultaneously.

Final Thoughts

I’ve been using Claude Code daily for months, and I had no idea how much complexity was hiding behind that clean terminal interface. The custom Yoga port alone would be a notable open-source project. The streaming tool executor is elegant. The permission system is genuinely novel.

Is it perfect? No. The 803 KB main.tsx suggests some build optimization choices that prioritize startup speed over code organization. And the feature gate system, while clever, means the public build is a subset of what the source can do.

But as a piece of software engineering, it’s impressive. Anthropic didn’t just build a chatbot that can run commands. They built a full agentic runtime with its own rendering engine, security model, and multi-agent coordination layer.

And they did it all in TypeScript. In a terminal. Running on Bun.

What a time to be alive.

Disclaimer: This analysis is based on leaked source code. I have no affiliation with Anthropic, and this post is purely for educational and technical discussion purposes. If you work at Anthropic and want this taken down, just ask.